Neuromorphic accelerators with dynamic sparse activation are specialized chips that process deep learning models efficiently by exploiting the sparsity inherent in neural network activations. Rather than computing over every neuron and weight, they perform work only for activations that are non-zero (or above a significance threshold), mimicking the event-driven behavior of biological brains to save energy. Companies such as Cerebras Systems, Mythic, and BrainChip are developing architectures that incorporate sparsity and in-memory compute for deep learning. These accelerators are in early commercialization and primarily target data center and edge AI inference. In December 2023, BrainChip announced that its Akida neuromorphic processor achieved energy-efficiency gains of up to 10x over GPUs on specific deep learning workloads by using event-based processing instead of the dense matrix operations common on general-purpose GPUs.
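To make the idea of computing only for non-zero activations concrete, here is a minimal software sketch in plain Python. It is an illustration under stated assumptions, not a description of how Akida or any other chip is implemented: the function names `dense_matvec` and `event_driven_matvec` and the `threshold` parameter are hypothetical, and real hardware does this routing in silicon rather than in loops. The sketch compares a dense matrix-vector product with an event-driven one and counts the multiply-accumulate (MAC) operations each performs.

```python
def dense_matvec(weights, activations):
    """Dense baseline: every weight is multiplied, even by zero activations."""
    outputs = [0.0] * len(weights)
    macs = 0
    for i, row in enumerate(weights):
        for j, a in enumerate(activations):
            outputs[i] += row[j] * a
            macs += 1
    return outputs, macs


def event_driven_matvec(weights, activations, threshold=0.0):
    """Sparse variant (illustrative assumption): only inputs whose magnitude
    exceeds `threshold` emit an event, and only events trigger MACs."""
    outputs = [0.0] * len(weights)
    macs = 0
    # Sparsity is detected at run time, per input vector ("dynamic").
    events = [(j, a) for j, a in enumerate(activations) if abs(a) > threshold]
    for j, a in events:
        # Each event updates every output neuron through one weight column.
        for i in range(len(weights)):
            outputs[i] += weights[i][j] * a
            macs += 1
    return outputs, macs


# Toy layer: 4 output neurons, 8 inputs, only 2 of which are active.
weights = [[0.1 * (i + 1) * (j - 3) for j in range(8)] for i in range(4)]
activations = [0.0, 1.5, 0.0, 0.0, 2.0, 0.0, 0.0, 0.0]

dense_out, dense_macs = dense_matvec(weights, activations)
sparse_out, sparse_macs = event_driven_matvec(weights, activations)

print(dense_macs, sparse_macs)  # 32 8
```

With a threshold of zero the two functions return the same outputs, so the saving here comes purely from skipped work: 8 MACs instead of 32 for this input. Raising `threshold` trades a small amount of accuracy for additional skipped computation, which is one reading of the "significantly non-zero" case described above.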

Why It Matters

Deep learning models keep growing, driving up energy consumption (training a model on the scale of GPT-3 is estimated to cost millions of dollars in compute) and latency, particularly for inference at the edge or in real-time applications. By performing only the necessary computations, accelerators that exploit dynamic sparse activation can substantially cut the energy footprint and raise the speed of AI inference. That makes advanced AI feasible for ubiquitous deployment in IoT devices, smart cameras, and autonomous systems, potentially saving billions in operational costs.

AI chip startups focused on efficiency and specialized AI hardware vendors stand to benefit most, while traditional CPU/GPU manufacturers face new competition in the lucrative AI inference market. The main technical barriers are building efficient hardware for detecting and routing dynamic sparsity, and creating compilers that can effectively map dense deep learning models onto sparse architectures.

Widespread adoption in specialized edge AI applications is projected within 3 to 8 years, with the US and China leading AI chip development. A second-order consequence could be a shift toward 'sparse-first' AI algorithms that are inherently more efficient and require less data, opening a new wave of AI research.

Related

Humpback Whales Adapt Song Structure Rapidly in Response to Environmental Changes

Researchers from the University of St Andrews and the Hawaii Institute of Marine Biology have discovered that humpback whales (Megaptera novaeangliae) can…

Rive

Rive is a powerful design and animation tool for creating interactive animations that run in real-time across web, mobile, and game engines. Developed by Rive…

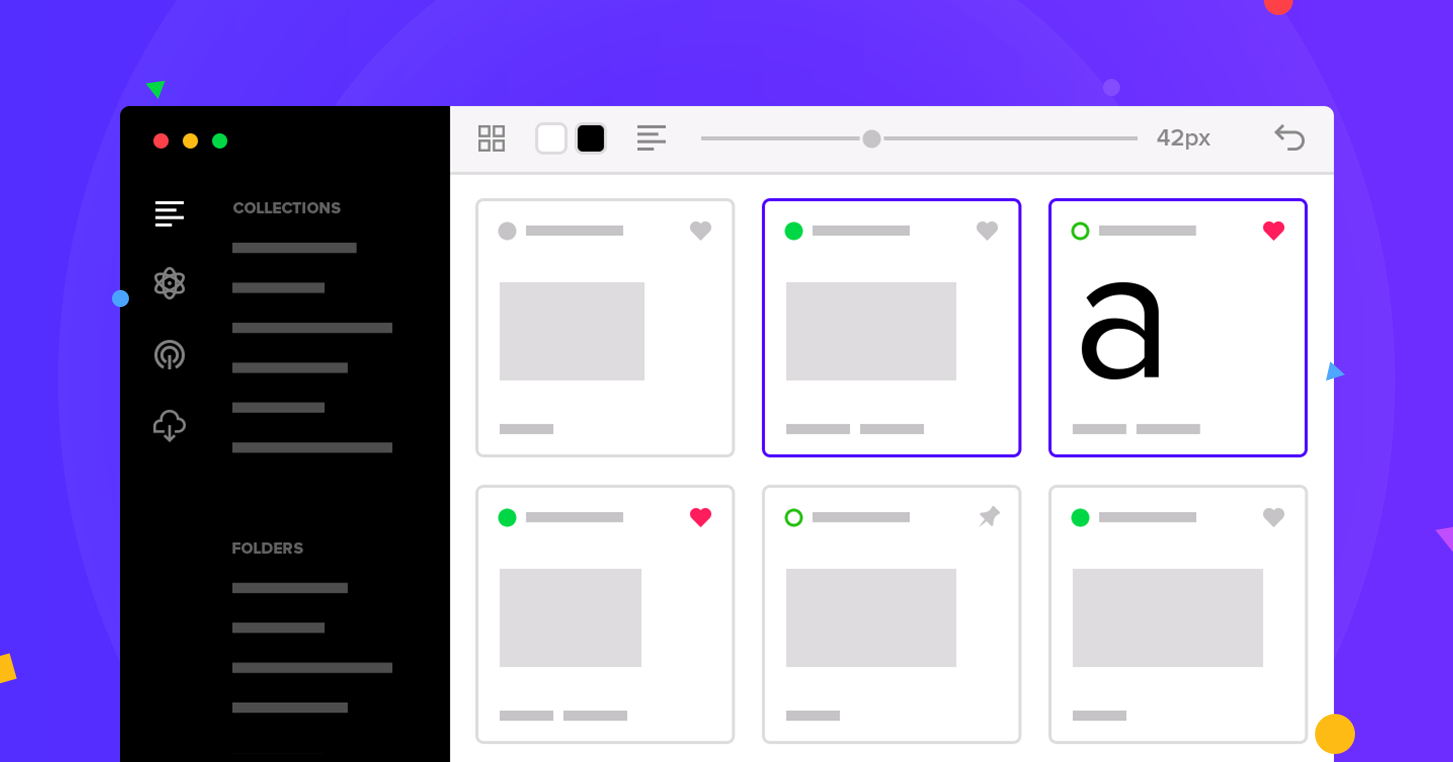

FontBase

FontBase is a lightning-fast, free font manager developed by Dominik Schilling, designed to help creatives organize, preview, and activate fonts effortlessly…

LEGO Botanical Collection Orchid

The LEGO Botanical Collection Orchid (10311) invites adults to a mindful building experience with its intricate 608-piece model of a vibrant orchid. Designed…

More from Future Radar

View all →

Mozilla's Opposition to Chrome's Prompt API

Read →

OpenAI's 'Goblins' - Novel AI Training Method

Read →

Zig Project's Anti-AI Contribution Policy

Read →

Granite 4.1 - IBM's 8B Model Matching 32B MoE

Read →

Federation of Forges

Read →

Ghostty Terminal Emulator

Read →

Mozilla's Opposition to Chrome's Prompt API

Read →

OpenAI's 'Goblins' - Novel AI Training Method

Read →

Zig Project's Anti-AI Contribution Policy

Read →

Granite 4.1 - IBM's 8B Model Matching 32B MoE

Read →

Federation of Forges

Read →

Ghostty Terminal Emulator

Read →Enjoyed this? Get five picks like this every morning.

Free daily newsletter — zero spam, unsubscribe anytime.