
Light field rendering chips are specialized processors designed to compute and display light field data efficiently. A light field captures not just the color and intensity of light but also the direction in which it travels through each point in space. Companies such as NVIDIA and Intel have invested in the underlying principles, as did the startup Lytro, which moved away from consumer hardware before shutting down in 2018, and university research groups at Stanford and MIT are exploring new architectural designs. The technology is still in the advanced research and early prototype phase, with advances in algorithms and hardware enabling more compact and powerful light field processing. In March 2023, researchers at the University of California, Berkeley, unveiled a prototype chip architecture that demonstrated real-time rendering of complex light fields with significantly lower computational overhead than traditional methods, at frame rates previously considered out of reach for mobile hardware. These chips aim to overcome the vergence-accommodation conflict inherent in current stereoscopic AR displays: each eye sees a flat image at a fixed focal distance while the eyes converge on virtual objects at varying depths, a mismatch that causes eye strain and nausea.
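To make the idea of directional light data concrete, here is a minimal sketch, assuming the common two-plane parameterization in which each ray is indexed by a viewing position (u, v) and an image position (s, t). The `LightField` class and all of its names are hypothetical and purely illustrative; they are not taken from any chip, vendor API, or the prototypes mentioned above.

```python
from dataclasses import dataclass, field


@dataclass
class LightField:
    """Toy 4D light field L(u, v, s, t): one RGB sample per ray (illustrative only)."""
    n_u: int  # number of viewing positions, horizontal
    n_v: int  # number of viewing positions, vertical
    n_s: int  # image samples per view, horizontal
    n_t: int  # image samples per view, vertical
    samples: dict = field(default_factory=dict)

    def _check(self, u, v, s, t):
        # Reject indices outside the sampled grid.
        if not (0 <= u < self.n_u and 0 <= v < self.n_v
                and 0 <= s < self.n_s and 0 <= t < self.n_t):
            raise IndexError("ray index out of range")

    def set_ray(self, u, v, s, t, rgb):
        self._check(u, v, s, t)
        self.samples[(u, v, s, t)] = rgb

    def get_ray(self, u, v, s, t):
        # Rays that were never written default to black.
        self._check(u, v, s, t)
        return self.samples.get((u, v, s, t), (0, 0, 0))

    def ray_count(self):
        # Total number of rays the grid can hold.
        return self.n_u * self.n_v * self.n_s * self.n_t


# A 3x3 grid of viewing positions, each seeing a 4x4 image.
lf = LightField(n_u=3, n_v=3, n_s=4, n_t=4)
lf.set_ray(1, 1, 2, 2, (255, 128, 0))
print(lf.get_ray(1, 1, 2, 2), lf.ray_count())
```

In this representation a conventional 2D image is the special case with a single viewing position (`n_u = n_v = 1`); the extra two dimensions are what carry the directional information.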

Why It Matters

The vergence-accommodation conflict is a major physiological barrier to comfortable, prolonged use of AR/VR headsets, and it limits the addressable market for spatial computing, which could be worth hundreds of billions. Light field rendering chips would let AR glasses display truly 3D images with accurate depth cues, removing this source of eye strain and making virtual objects much harder to distinguish from real ones. Consumers would gain comfort and immersion, while developers could create more realistic content. The main technical hurdles are managing the immense data throughput that light field rendering requires and achieving power efficiency in a mobile form factor. These chips might appear in high-end AR/VR devices within 8 to 12 years. NVIDIA and Google are actively working on real-time 3D rendering and light field capture. A second-order effect could be a fundamental shift in how 3D content is created and distributed, moving from polygon meshes to light field representations.
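The data-throughput hurdle can be illustrated with back-of-the-envelope arithmetic. The sketch below assumes raw, uncompressed samples and purely hypothetical figures (resolution, number of viewing directions, frame rate); none of these numbers come from a real device or from the research mentioned above.

```python
def raw_bandwidth_gb_per_s(width, height, views_x, views_y, bytes_per_sample, fps):
    """Raw, uncompressed bandwidth in gigabytes per second (1 GB = 1e9 bytes)."""
    rays_per_frame = width * height * views_x * views_y
    bytes_per_second = rays_per_frame * bytes_per_sample * fps
    return bytes_per_second / 1e9


# Hypothetical baseline: one 1920x1080 RGB view at 60 frames per second.
flat = raw_bandwidth_gb_per_s(1920, 1080, 1, 1, 3, 60)

# Same resolution, but with an assumed 8x8 grid of viewing directions per pixel.
light_field = raw_bandwidth_gb_per_s(1920, 1080, 8, 8, 3, 60)

print(f"2D: {flat:.2f} GB/s")
print(f"Light field: {light_field:.2f} GB/s")
print(f"Ratio: {light_field / flat:.0f}x")
```

Under these assumptions the raw data rate grows in direct proportion to the number of viewing directions, which is why compression and dedicated hardware are seen as necessary for a battery-powered headset.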

Related

JWST Reveals Early Galaxies Formed Faster Than Predicted After Big Bang

Observations from the James Webb Space Telescope (JWST) have uncovered numerous large, mature galaxies that formed surprisingly quickly just a few hundred…

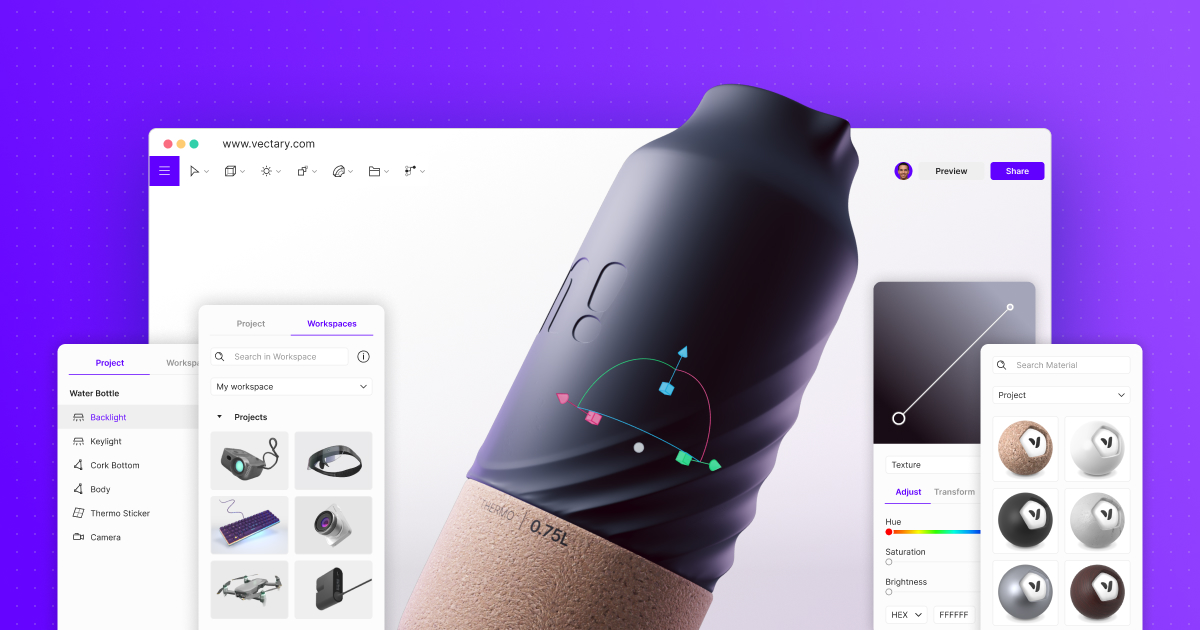

Vectary

Vectary is an online 3D design tool and 3D asset library created by Vectary a.s., enabling users to create stunning 3D models, mockups, and augmented reality…

Philips Hue Play Gradient Lightstrip 65 inch

The Philips Hue Play Gradient Lightstrip brings immersive smart lighting to your entertainment setup, syncing with your screen content for a dynamic ambient…

Connected Papers

Connected Papers is a unique web application created by a small startup to help researchers find and explore academic papers through a visual interface. Its…
